Intelligent Agents are back

Ray Ozzie: Dawn of a new day

[…] We’re moving toward a world of 1) cloud-based continuous services that connect us all and do our bidding, and 2) appliance-like connected devices enabling us to interact with those cloud-based services.

Continuous services are websites and cloud-based agents that we can rely on for more and more of what we do. On the back end, they possess attributes enabled by our newfound world of cloud computing: They’re always-available and are capable of unbounded scale. They’re constantly assimilating & analyzing data from both our real and online worlds. They’re constantly being refined & improved based on what works, and what doesn’t. By bringing us all together in new ways, they constantly reshape the social fabric underlying our society, organizations and lives. From news & entertainment, to transportation, to commerce, to customer service, we and our businesses and governments are being transformed by this new world of services that we rely on to operate flawlessly, 7×24, behind the scenes. […]

Follow-up: Don Dodge gives his own vision

Yes! Intelligent agents are back. This reminds me of my good old days at the Nokia Research Center in Boston, when I was working on projects involving personal intelligent agents that assisted a user through the meeting-scheduling process. Back then, those agents were supposed to reside on the mobile phone and act on behalf of its owner, with access to the agenda and making use of AI techniques. Working for a handset manufacturer was reason enough to make them run on the handset, but in a fully connected world, with all my data (including my agenda) in the cloud, it also makes sense to have those agents running in the cloud. They might still need some local presence on the device (kind of like a proxy) to be able to interact with all the sensors in the environment, for instance.

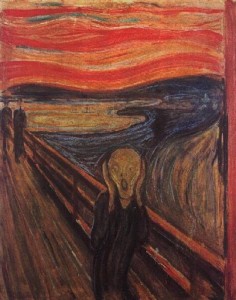

I also had another, very interesting, project related to “affective computing”: a personal agent that would guide me through a museum or an exhibit and take me to specific items in a specific order depending on my mood. For instance, no need to look at “Cry” if I am already depressed… At the same time, a colleague of mine was implementing an agent that would compute crowd movement and take the user to places without

Anyway… interesting developments here, and I am looking forward to seeing how this will evolve!